A (possible) framework for determining what AI should, and should not, be used for

Let's proceed wisely and thoughtfully, shall we?

Like everyone else, I’ve been thinking a lot about the new artificial intelligence craze and what its role will be in my life. As a college professor, I’ve found it a topic of particular consternation for my colleagues and me as we determine what our approach to the use of AI in our classrooms and assignments should be. This has led me to wonder how, on a broader societal level, we can distinguish between what AI is good for, what it’s bad for, and what it absolutely should not be used for. And I’ve come up with a potential framework that some might find useful as we move forward collectively with these AI tools.

The framework begins with three yes-or-no questions that one can apply to a specific activity or task (the more specific, the better):

Are humans better at it? Y/N

Would it create a negative AI feedback loop if humans never did it at all? Y/N

Is the use of AI likely to exacerbate existing significant social problems in the affected arena(s)? Y/N

Of course, none of these questions is easily answerable, but a test of whether humans or AI perform better on a given task could help you answer question 1. It is important to note, however, that “better” must include higher quality. If an AI can do it quicker but the output is not as well done (as in putting together a literature review, for instance), that cannot be considered better. In addition, this question may need to be revisited from time to time, as AI will get better at certain tasks.

Questions 2 and 3 are more thought experiments, but they can be puzzled through. Consider something like driving, for instance. If we got to a point where no humans were driving any cars, ever, would that create a negative feedback loop in any way? No. But academic research is a good example of something we would not want to be done without any human involvement at all, as we’d lose all access to new insight, innovation, progress, and original thought. What about question 3: Would the use of AI exacerbate existing social problems with driving (e.g. accidents, drunk driving, texting while driving)? Assuming it was good at it, then I don’t think so. But for the existing social problems within academic research (publish or perish, high pressure environment, idea laundering, academic bullying), it is in fact very likely to do so.

Another good example is advising students on which classes they should take each semester in order to complete their major. Although AI may, on the whole, be better at advising students, if no humans were ever involved, would that create a negative feedback loop? It is likely that it would, as there are often cases which require exception, thought, and consideration, and the most probable solution is not always the best one. Additionally, the existing problems with advising, such as a lack of personal attention, students feeling like they are treated like a number, and students not taking responsibility for making sure that they are completing their own curriculum on time, would likely be exacerbated by the use of an AI.

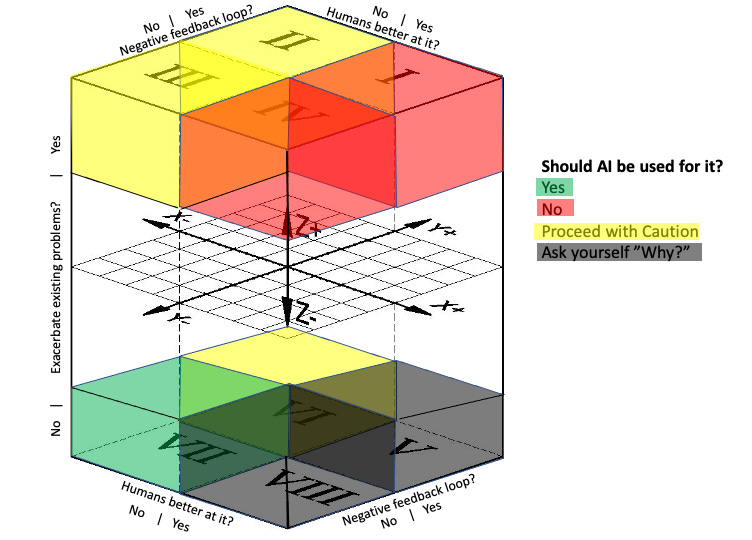

Once arrived at, the answers to the three questions will land in one of eight cells of a three-dimensional 2 x 2 x 2 matrix, as shown in the figure, and the resulting answer will be one of four: “Yes,” AI can be used; “No,” AI should not be used; “Proceed with caution”; or “Ask yourself why.”

The final categories, along with their suggested responses, are also listed below:

I (+++): Humans are better at it, it would create a negative feedback loop if humans never did it, and use of AI is likely to exacerbate existing social problems = NO

II (-++): Humans are not better at it, it would create a negative feedback loop if humans never did it, and use of AI is likely to exacerbate existing social problems = PROCEED WITH CAUTION

III (--+): Humans are not better at it, it would not create a negative feedback loop if humans never did it, and use of AI is likely to exacerbate existing social problems = PROCEED WITH CAUTION

IV (+-+): Humans are better at it, it would not create a negative feedback loop if humans never did it, and use of AI is likely to exacerbate existing social problems = NO

V (++-): Humans are better at it, it would create a negative feedback loop if humans never did it, and use of AI is not likely to exacerbate existing social problems = ASK YOURSELF WHY

VI (-+-): Humans are not better at it, it would create a negative feedback loop if humans never did it, and use of AI is not likely to exacerbate existing social problems = PROCEED WITH CAUTION

VII (---): Humans are not better at it, it would not create a negative feedback loop if humans never did it, and use of AI is not likely to exacerbate existing social problems = YES

VIII (+--): Humans are better at it, it would not create a negative feedback loop if humans never did it, and use of AI is not likely to exacerbate existing social problems = ASK YOURSELF WHY
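For readers who think in code, the eight categories above amount to a simple lookup table. Here is a minimal Python sketch of that table. The names `Result`, `TABLE`, and `classify` are illustrative choices of mine, not part of the framework, and the three arguments are simply your own yes/no answers to questions 1, 2, and 3.

```python
from typing import NamedTuple


class Result(NamedTuple):
    category: str  # Roman numeral I..VIII
    signs: str     # e.g. "+-+", one sign per question, in order
    verdict: str   # one of the four suggested responses


# Keys are (humans_better, negative_feedback_loop, exacerbates_social_problems),
# i.e. the answers to questions 1, 2, and 3, with True meaning "yes".
TABLE = {
    (True, True, True): ("I", "No"),
    (False, True, True): ("II", "Proceed with caution"),
    (False, False, True): ("III", "Proceed with caution"),
    (True, False, True): ("IV", "No"),
    (True, True, False): ("V", "Ask yourself why"),
    (False, True, False): ("VI", "Proceed with caution"),
    (False, False, False): ("VII", "Yes"),
    (True, False, False): ("VIII", "Ask yourself why"),
}


def classify(humans_better: bool, feedback_loop: bool, exacerbates: bool) -> Result:
    """Map three yes/no answers to a category, its sign pattern, and a verdict."""
    key = (bool(humans_better), bool(feedback_loop), bool(exacerbates))
    category, verdict = TABLE[key]
    signs = "".join("+" if answer else "-" for answer in key)
    return Result(category, signs, verdict)


if __name__ == "__main__":
    # Humans better, feedback loop, social problems exacerbated: category I.
    print(classify(True, True, True))
```

The table does none of the hard work, of course. The judgment goes into answering the three questions; the lookup only keeps the mapping consistent.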

Obviously, it’s not a perfectly precise mechanism. While the answers “Yes” and “No” are quite clear, the other two responses are more ambiguous. The extent to which you should proceed in those cases will really depend on the potential problems you identified in questions 2 and 3 and how sure you are that you thought it all through.

“Proceed with caution” could mean anything from “watch out for these pitfalls” (as in something like writing a business plan) to “proceed with extreme caution, and course-correct immediately upon the first sign of these dire consequences” (as in medical diagnoses). I’ve also considered being more conservative and making everything that is likely to exacerbate existing social problems a “No” as well, but, considering the wide range of factors that could influence the answers to these questions (and that sometimes the trade-off in practice is more positive than we thought), I figured I’d leave it more open to individual judgment.

“Ask yourself why” applies to those categories in which AI is not actually better at a certain task than humans, and there may or may not be some anticipated problems. For these situations, it’s really a question of whether or not the use of AI has any other particular benefit that could be worth the trade-off for any potential consequences (considered or not). If the answer to the “why” is good enough, then proceed with caution. If not, don’t proceed with AI in this task.

Content moderation is a good example. Humans may be better at discerning text-based violations of a platform's policy than an AI, and the consequences for political speech could be too great if AI is given that kind of power over civic discourse. So, there, the answer would be “No.” But for something like flagging images of sexual abuse or graphic violence, it may be worth accepting potentially more errors in judgment to preserve the mental health of employees who would otherwise be tasked with looking at them. (This example also underscores why it’s important to be as specific as possible when thinking through tasks.) Other trade-offs that might be considered include things like speed, efficiency, or entertainment (as in AI-generated art). But, again, the “Ask yourself why” response can only resolve to “Proceed with caution” or “No.”
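The second step for “Ask yourself why” can be sketched the same way. This is only an illustration: the function name and its single argument are hypothetical, and the argument stands in for your own judgment about whether some other benefit (employee wellbeing, speed, entertainment) justifies the trade-off.

```python
def resolve_ask_yourself_why(benefit_justifies_tradeoff: bool) -> str:
    """Resolve an "Ask yourself why" verdict into one of its two possible outcomes.

    The argument is a judgment call, not something the code can compute.
    """
    return "Proceed with caution" if benefit_justifies_tradeoff else "No"


if __name__ == "__main__":
    # Flagging graphic images: sparing employees may justify the extra errors.
    print(resolve_ask_yourself_why(True))
    # Policing political speech: the civic stakes outweigh the benefits.
    print(resolve_ask_yourself_why(False))
```

Note that there is no path from “Ask yourself why” to an unqualified “Yes.”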

I’ve begun thinking about things I’d like my students to learn and running them through this framework. For example, I want them to be able to think critically. Humans, I would say, are better than AI at this, it would be a very bad negative feedback loop if humans never did it at all, and the use of AI is definitely likely to exacerbate social problems that already exist around a lack of critical thinking more broadly. So, that’s a category I, +++, and the answer is a hard no.

But what about something like writing a press release? AI is actually quite good at that and in fact can speak in a corporate voice better than a human if you train it on company documentation. Would it create a negative feedback loop if humans never wrote press releases? I don’t think so. The existing problems with press releases include things like a lack of transparency, intentionally biased and positive framing for the organization they were written about, and verbatim use in “news” reporting. These problems, in fact, might be exacerbated by the use of AI. So, I need to really think about whether or not I want to allow my students to use AI for this task, and make an extra effort to warn them about these problems if I do.

What about transcribing interviews? Are humans better at doing that than AI? Not really. Would it create a negative feedback loop if humans never did it? No. Are there existing social problems that would likely be exacerbated if we used AI for this task? Can’t think of any. So, this is a good use of AI for my students.

I have also tried applying this framework to other tasks outside of my professional wheelhouse, thinking about things like nursing home care (NO), mapping genome sequences (Yes!), song-writing (Ask yourself why), and accounting (proceed with caution), and so far it seems to work pretty well. So I thought I’d put this out there in the world and see what others think, as I continue developing it.